Thinking, Fast and Slow

Thinking, Fast and Slow argues that our minds run on two systems: a fast, intuitive, error‑prone one and a slow, deliberate, effortful one, and most cognitive mistakes come from trusting the fast system too much.

Who this book is for / who it is not for

If you want to understand why your decisions so often drift from what you “know” is rational, this book is worth your time. Daniel Kahneman gives you a vocabulary and a set of mental pictures for the glitches in judgment that shape everything from daily choices to public policy.

It is especially useful if you make repeated decisions under uncertainty: investors, product managers, founders, negotiators, leaders deciding where to place scarce time and money. It also fits anyone building a personal system around values and goals, since it exposes how easily those get bent by short-term emotion and noise. Read it alongside pieces like Decision Fatigue and How to Reduce It to design buffers around your weaker moments.

If you want a light, motivational read, this will feel dense. If you already work deep in behavioral science, much of the material will be familiar, though still valuable as a grand tour of the field.

Two minds in one brain: why the split matters

Kahneman’s central image is of two “systems.” System 1 is fast, automatic, effortless. It handles reading facial expressions, finishing common phrases, driving on an empty road, and jumping away from a snake-shaped stick. System 2 is slow, deliberate, and taxing. It solves 17 × 24, fills out tax forms, compares mortgage offers, and keeps your temper during an argument.

The trick is that System 1 is always on and constantly produces impressions, intuitions, and stories. System 2 is lazy. It mostly endorses what System 1 offers, only engaging fully when something feels surprising or when a problem explicitly demands calculation.

Kahneman illustrates this with the famous bat-and-ball puzzle: you are told the total price and how much more the bat costs than the ball, and asked to find the ball’s price. A quick, appealing number springs to mind for most people. Slower checking reveals that the first answer is wrong. Even at elite universities, more than half of the students Kahneman cites gave the intuitive, incorrect answer. The point is not arithmetic skill; it is how readily we trust the first plausible story that pops into mind.
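
The arithmetic behind the puzzle can be checked in a few lines. The sketch below assumes the commonly quoted figures for the puzzle (a total of 110 cents, with the bat costing 100 cents more than the ball), which this summary does not spell out. The function name `ball_price_cents` is made up for illustration.

```python
def ball_price_cents(total_cents: int, difference_cents: int) -> int:
    """Solve ball + (ball + difference) = total for the ball's price, in cents."""
    remainder = total_cents - difference_cents
    if remainder < 0 or remainder % 2 != 0:
        raise ValueError("no whole-cent solution for these inputs")
    return remainder // 2


# Assumed figures: the pair costs 110 cents, the bat costs 100 cents more.
TOTAL, DIFFERENCE = 110, 100

# The tempting shortcut is to subtract the difference from the total.
intuitive_guess = TOTAL - DIFFERENCE
correct_ball = ball_price_cents(TOTAL, DIFFERENCE)
correct_bat = correct_ball + DIFFERENCE

# Checking the shortcut: what total would that guess actually imply?
total_implied_by_guess = intuitive_guess + (intuitive_guess + DIFFERENCE)

print(f"Intuitive guess for the ball: {intuitive_guess} cents")
print(f"Total implied by that guess:  {total_implied_by_guess} cents")
print(f"Correct ball price:           {correct_ball} cents")
print(f"Correct bat price:            {correct_bat} cents")
```

Working in whole cents avoids floating-point rounding. The one-line check on the implied total is the kind of slow verification the fast answer skips.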

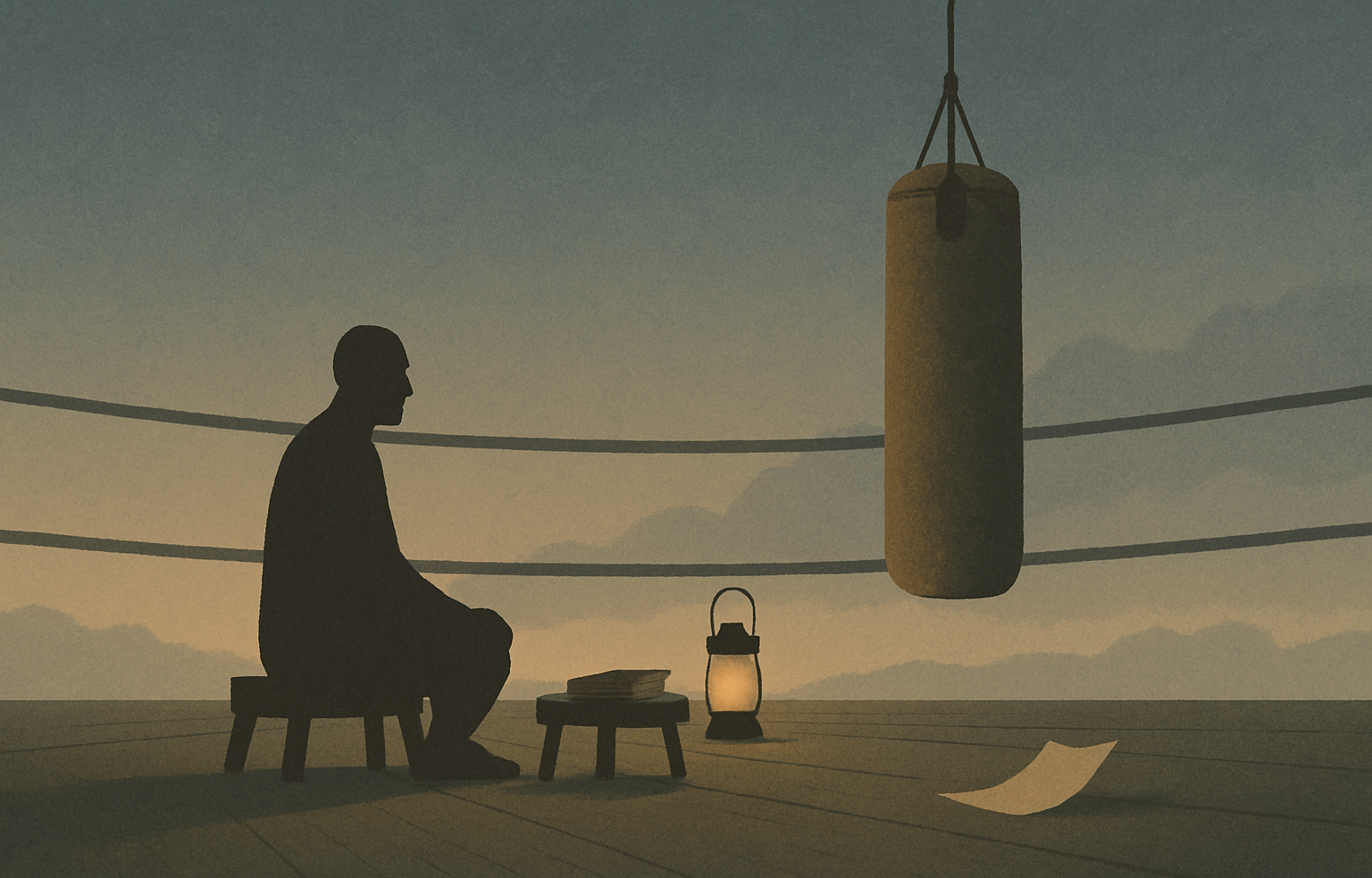

Understanding this split helps you see your own thinking as layered. When you feel absolutely certain in a snap judgment, that is often System 1 speaking. The quality of your life’s big decisions depends on noticing when to invite System 2 into the room, even when it feels uncomfortable and slow.

Heuristics and biases: shortcuts that quietly mislead

Kahneman spends much of the book cataloging the systematic mistakes our fast thinking makes. These are not random errors that cancel out. They are predictable patterns.

One of his clearest examples is the availability heuristic. We judge frequency and risk by what easily comes to mind. After watching news reports about plane crashes, people overestimate the danger of flying and underestimate car accidents, which kill far more people but are less vividly reported. In one experiment, subjects heard a list of names in which the women were more famous but the men were more numerous. When asked whether the list contained more men or more women, most said women, because the famous names were easier to recall.

Another pattern is anchoring. Suppose you spin a wheel of fortune that is rigged to stop at either 10 or 65, and you are then asked whether the percentage of African countries in the United Nations is higher or lower than that number. Your final guess clings to that arbitrary anchor: those who saw 10 give lower estimates than those who saw 65. Even judges, experts in their domain, gave longer hypothetical sentences after being exposed to higher random numbers.

Then there is loss aversion. Losses hurt more than equal gains please us. In a classic experiment, participants were offered a coin flip: lose 100 dollars on tails, gain 150 dollars on heads. Many refused, even though the expected value is positive (25 dollars per flip on average). The pain of losing loomed larger than the satisfaction of gaining. This same pattern shows up in wage negotiations, investment behavior, and even how we frame habits: giving up dessert stings more than the pleasure of eating fruit makes up for.
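
A rough way to see why the coin flip gets refused is to compare its plain expected value with a version in which losses count extra. In the sketch below, `loss_weight=2.0` is an assumption chosen for illustration, not a figure from this summary, and `felt_value` is a made-up name for a deliberately simplified valuation, not prospect theory in full.

```python
def expected_value(outcomes):
    """Probability-weighted average of (probability, payoff) pairs."""
    return sum(p * x for p, x in outcomes)


def felt_value(outcomes, loss_weight=2.0):
    """Same average, but each loss is multiplied by loss_weight.

    loss_weight=2.0 is an illustrative assumption, not a measured constant.
    """
    return sum(p * (x if x >= 0 else loss_weight * x) for p, x in outcomes)


# The offer described above: heads wins 150 dollars, tails loses 100 dollars.
coin_flip = [(0.5, 150), (0.5, -100)]

print(expected_value(coin_flip))  # 25.0
print(felt_value(coin_flip))      # -25.0
```

With losses weighted twice as heavily as gains, a bet worth 25 dollars on average feels like a losing proposition. Setting `loss_weight=1.0` recovers the plain expected value.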

Once you see these shortcuts, it becomes obvious how they shape goals, careers, and relationships. They sway which risks you avoid, which opportunities you ignore, and which conflicts you escalate.

The stories we tell: coherence over accuracy

Kahneman argues that System 1 is a storyteller. It craves coherence, not accuracy. It takes scattered facts, guesses about causes, and builds a neat narrative that feels right, even when evidence is thin.

The Linda problem is the classic demonstration. Participants read a vivid description of a socially engaged philosophy graduate, then are asked which description of her is more probable: one that simply names her occupation or one that adds a politically aligned label on top of that occupation. A large majority choose the more detailed option, even though any extra condition must make it less probable. The added label fits the story, so it feels more true.
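
The rule being violated is that a conjunction can never be more probable than either of its parts, because P(A and B) = P(A) × P(B | A) and P(B | A) is at most 1. The sketch below uses made-up probabilities purely for illustration; none of the numbers come from the book.

```python
def conjunction_probability(p_a: float, p_b_given_a: float) -> float:
    """Return P(A and B) = P(A) * P(B | A)."""
    for p in (p_a, p_b_given_a):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    return p_a * p_b_given_a


# Hypothetical numbers: even if the added label is very likely given the
# occupation, the combined description cannot beat the occupation alone.
p_occupation = 0.05
p_label_given_occupation = 0.9

p_both = conjunction_probability(p_occupation, p_label_given_occupation)

print(f"P(occupation)           = {p_occupation}")
print(f"P(occupation and label) = {p_both:.3f}")
print("Conjunction is no more probable:", p_both <= p_occupation)
```

However well the extra detail fits the story, multiplying by a number no greater than 1 can only shrink the probability or leave it unchanged.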

This narrative hunger also feeds hindsight bias. After an event occurs, we see it as having been obvious. Kahneman recalls how people quickly rewrite their memories of prior confidence about election outcomes or business decisions. Once you know the ending, you forget how uncertain the situation actually felt at the time.

In everyday life, this pushes us to overexplain our own successes and failures. We credit insight where there was luck, and we invent stable traits to justify one-off behaviors. That can distort how we set goals and interpret progress. A failed project becomes a story about personal inadequacy, rather than a mix of timing, constraints, and partial mistakes.

Training yourself to question the first satisfying story, and to ask what information is missing, is one of the quiet disciplines behind better judgment. It aligns closely with practices like clarifying core values and vision, since those give you a more stable frame than whatever story your mood generates in the moment.

Noise, overconfidence, and the limits of expertise

Kahneman is unsparing about how overconfident we all are in our own judgments, experts included. We do not just make biased errors. We are noisy. Two doctors reading the same scan, or two hiring managers reading the same resume, can reach strikingly different conclusions, even with identical information.

In one memorable example, he describes studies of the predictions made by financial professionals and seasoned executives. Their forecasts of future earnings or market movements were barely better than chance, yet they still felt strong confidence in their intuitions. The failure was not just prediction error but a blindness to how uncertain the environment truly was.

Kahneman contrasts experienced intuition in stable domains, like chess, with intuition in noisy domains, like stock picking. Chess masters see patterns because the underlying environment has clear rules and lots of repetition. A firefighter who senses that a building is about to collapse has similar grounded intuition, built on many cycles of feedback. By contrast, a portfolio manager working in a volatile market rarely gets clean, repeated feedback for any specific pattern.

For personal decision making, this suggests two moves. First, treat confidence as a feeling, not a guarantee of accuracy. Second, build simple rules and external structures to compensate for noise. Predefined checklists for hiring, rules for when to sell an investment, or scheduled reviews of goals reduce the influence of your mood and the story of the day. This pairs well with pieces like The Power of Goal Setting: How to Set and Achieve Your Goals, which emphasize repeatable, measurable structures over hunches.
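
As one possible shape for such a rule, the sketch below scores candidates against a checklist fixed in advance and simply adds the ratings. The criteria, the 1 to 5 scale, the equal weighting, and all names and ratings are hypothetical choices for illustration, not a procedure taken from this summary.

```python
# Hypothetical criteria, decided before any candidate is reviewed.
CRITERIA = ("technical skill", "reliability", "communication")


def checklist_score(ratings: dict[str, int]) -> int:
    """Sum 1-5 ratings over the predefined criteria, each rated separately."""
    missing = [c for c in CRITERIA if c not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for c in CRITERIA:
        if not 1 <= ratings[c] <= 5:
            raise ValueError(f"rating for {c!r} must be between 1 and 5")
    return sum(ratings[c] for c in CRITERIA)


# Made-up ratings for two made-up candidates.
candidates = {
    "candidate_a": {"technical skill": 4, "reliability": 5, "communication": 2},
    "candidate_b": {"technical skill": 3, "reliability": 3, "communication": 4},
}

scores = {name: checklist_score(r) for name, r in candidates.items()}
best = max(scores, key=scores.get)

for name, score in scores.items():
    print(f"{name}: {score}")
print("Highest score:", best)
```

The point of the design is that the criteria and the way they combine are settled before the decision, so the final call depends less on the mood or the story of the day.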

The honest caveat

Thinking, Fast and Slow is foundational, but it is not scripture. Some of the studies it describes, including classic priming experiments, have failed to replicate during psychology’s broader “replication crisis.” Effects where subtle cues seem to change behavior, such as words related to old age making people walk more slowly, have not always held up under stricter methods.

Kahneman also tends to present the System 1 / System 2 model as a clean architecture, when in reality brain processes are far more intertwined. The two-system picture is a helpful metaphor, not a literal map of the brain. The book also leans heavily on lab experiments with small samples and artificial tasks, and translating those to complex real-world settings requires caution.

For a self-development reader, the risk is overapplying the vocabulary of biases to every decision, or using it to pathologize normal emotions. The value is in awareness and gentle skepticism, not self-blame for having a human brain.

Where to start

This is a long, dense book. You do not need to read it straight through. Start with Part I, especially the chapters introducing the two systems and the bat-and-ball style puzzles. Then move to the sections on availability and anchoring in Part II and on loss aversion in Part IV, which are closest to daily life decisions.

If time is tight, focus on the chapters on overconfidence, the illusion of understanding, and the planning fallacy. These sections alone will change how you think about your own forecasts and goals. The later technical chapters on prospect theory are valuable, but you can treat them as a second pass once the earlier material has settled.

To decide better, you need to see how your own mind tricks you long before anyone else does.

“Nothing in life is as important as you think it is while you are thinking about it.” ― Daniel Kahneman